Case 03 · Focus Agents · Solo Founder · Feb–Mar 2026

I built it. Users loved it. I shut it down anyway.

Focus Agents was a multi-agent platform that cut a researcher's 26-hour synthesis job to 33 minutes. Solo-built. Production-ready in six weeks. Six PMs and product designers on a free Pro tier in closed beta. By every standard metric, it was working. So why did I stop? Because I watched what they were actually doing with it. And I couldn't unsee it.

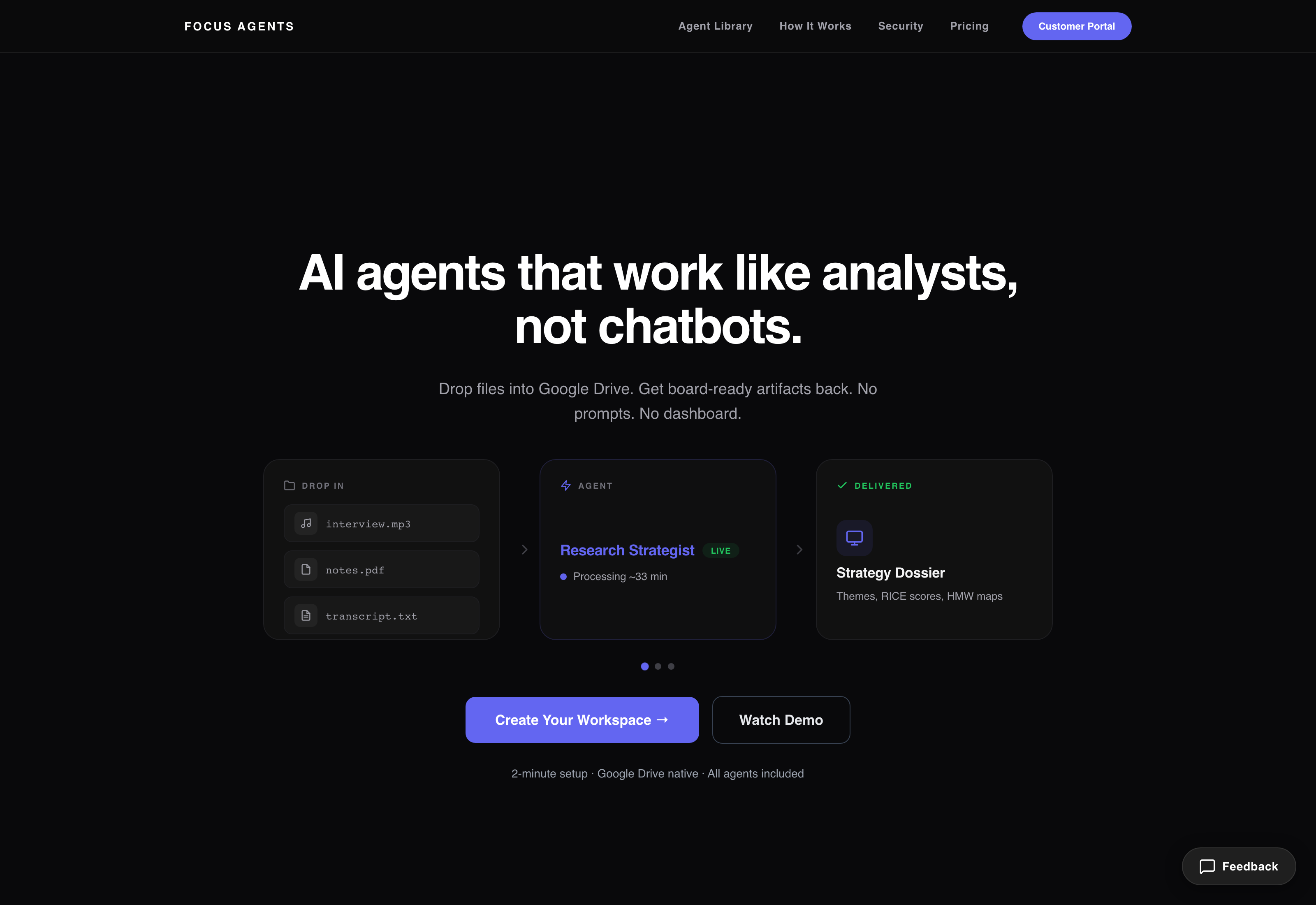

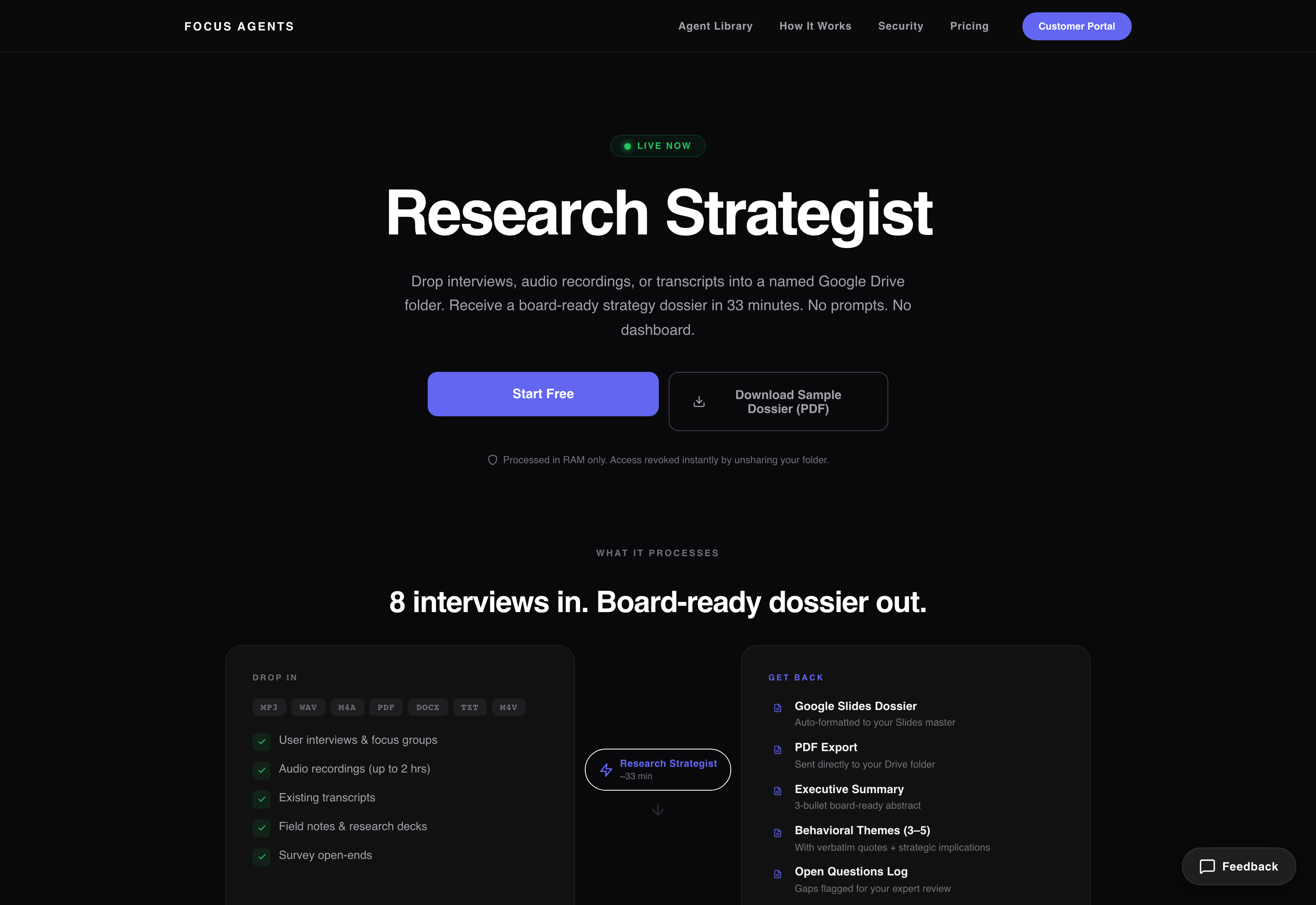

focusagents.io — drop files into Google Drive, get board-ready artifacts back. No prompts. No dashboard.

What it actually did

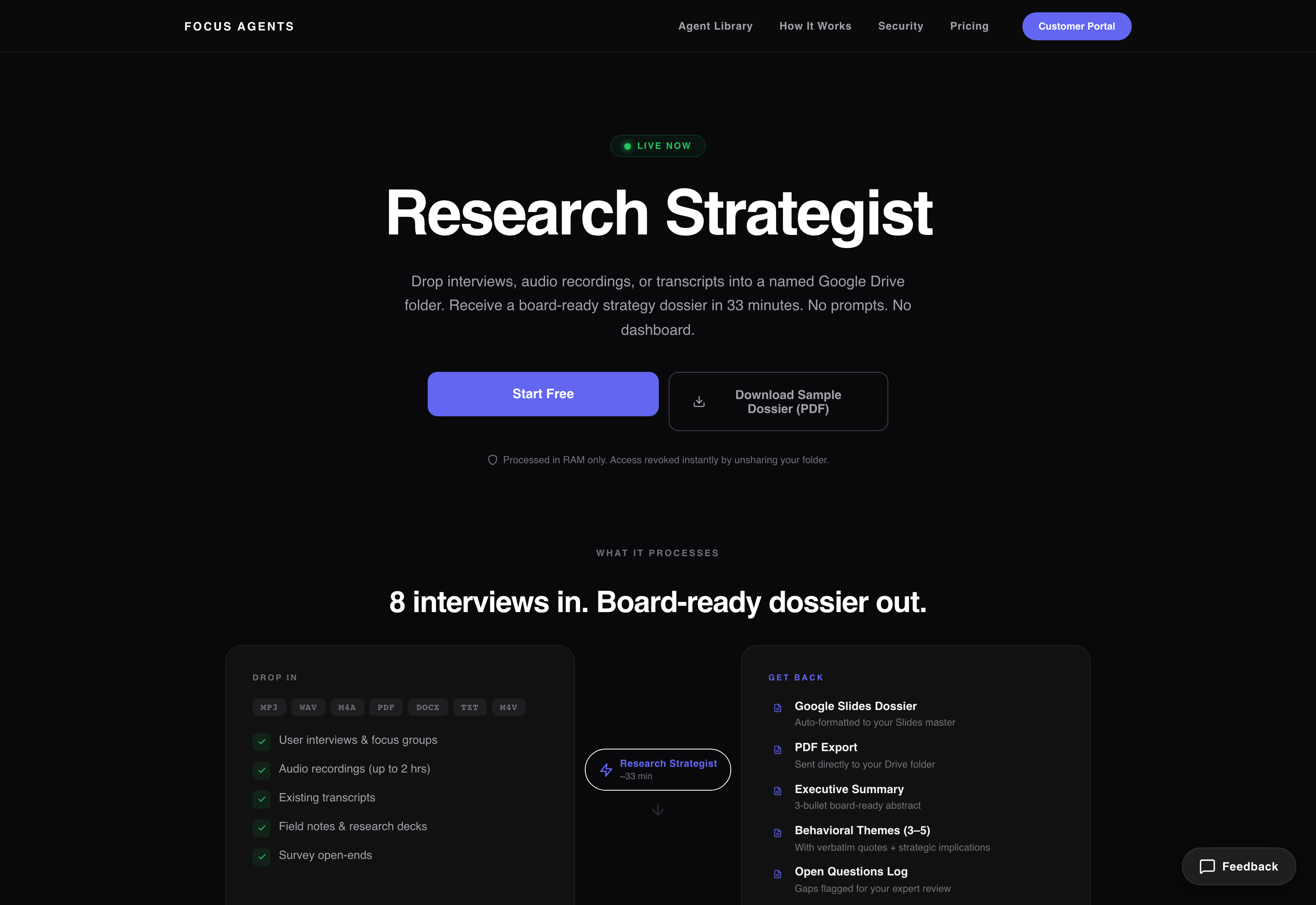

Three agents, all running production. The Research Strategist took

interview transcripts and audio and turned them into a four-artifact strategy package — a

Google Slides dossier, an executive summary, a quote library, a how-might-we doc. The

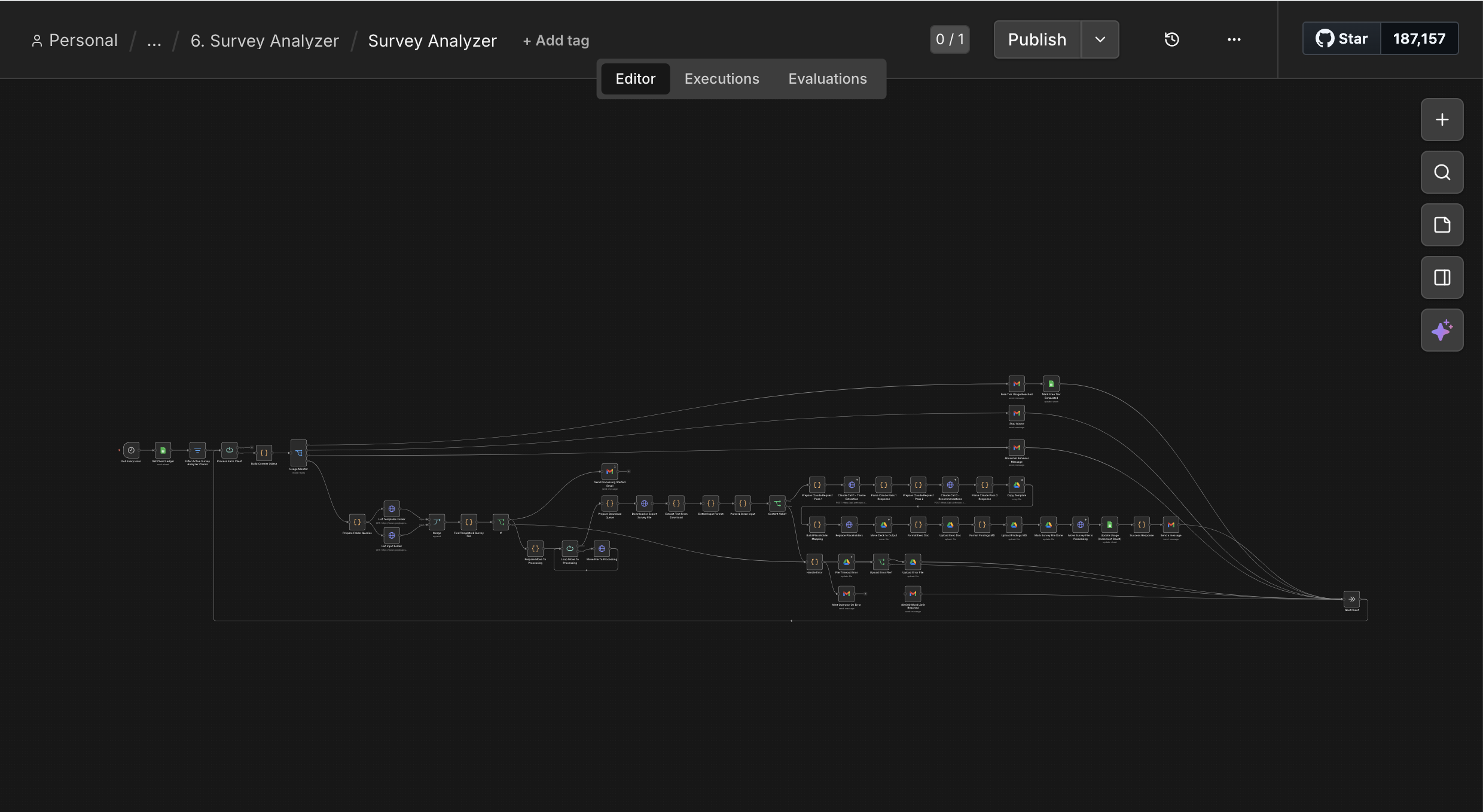

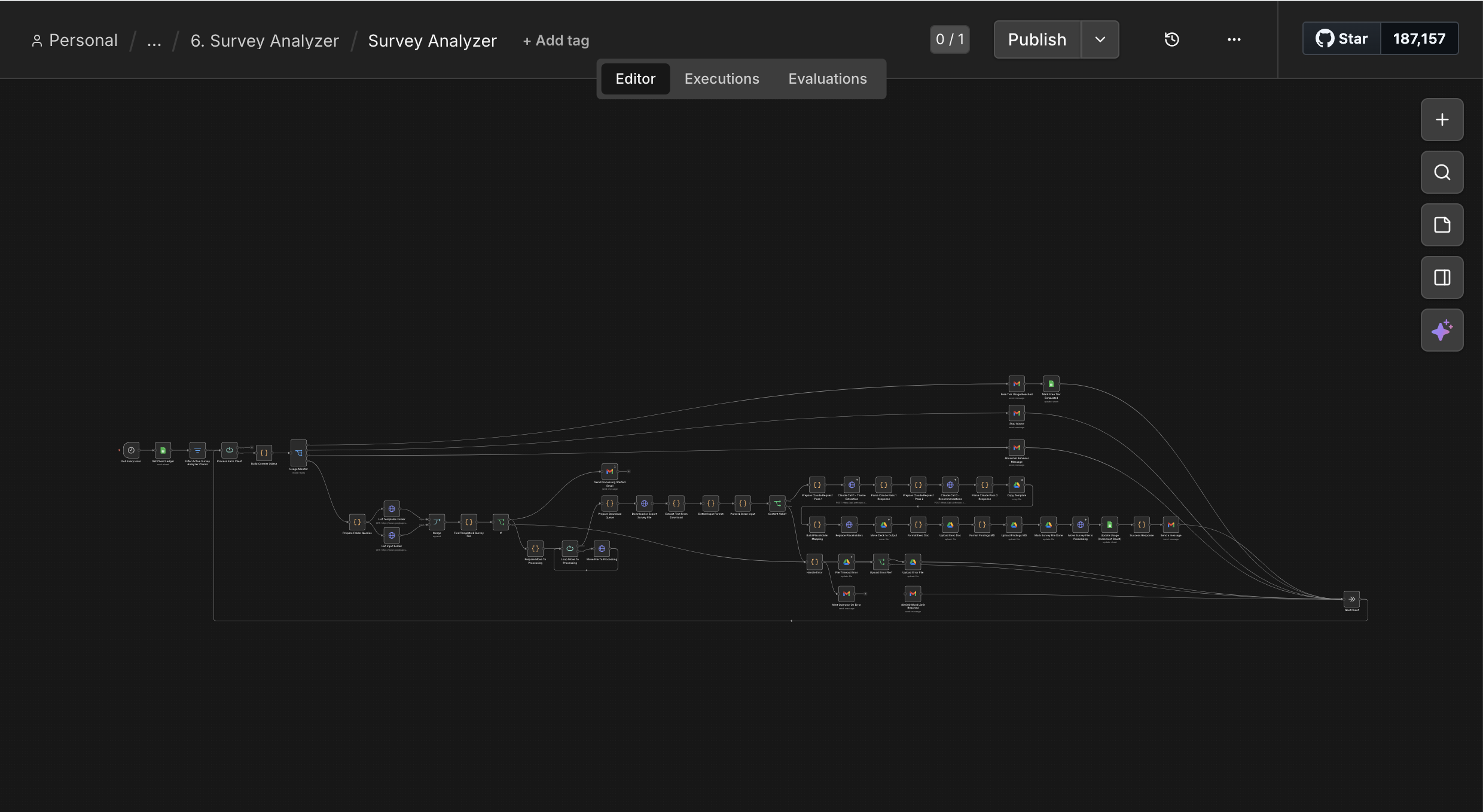

Survey Analyzer ate CSVs from Typeform, Qualtrics, or Google Forms and

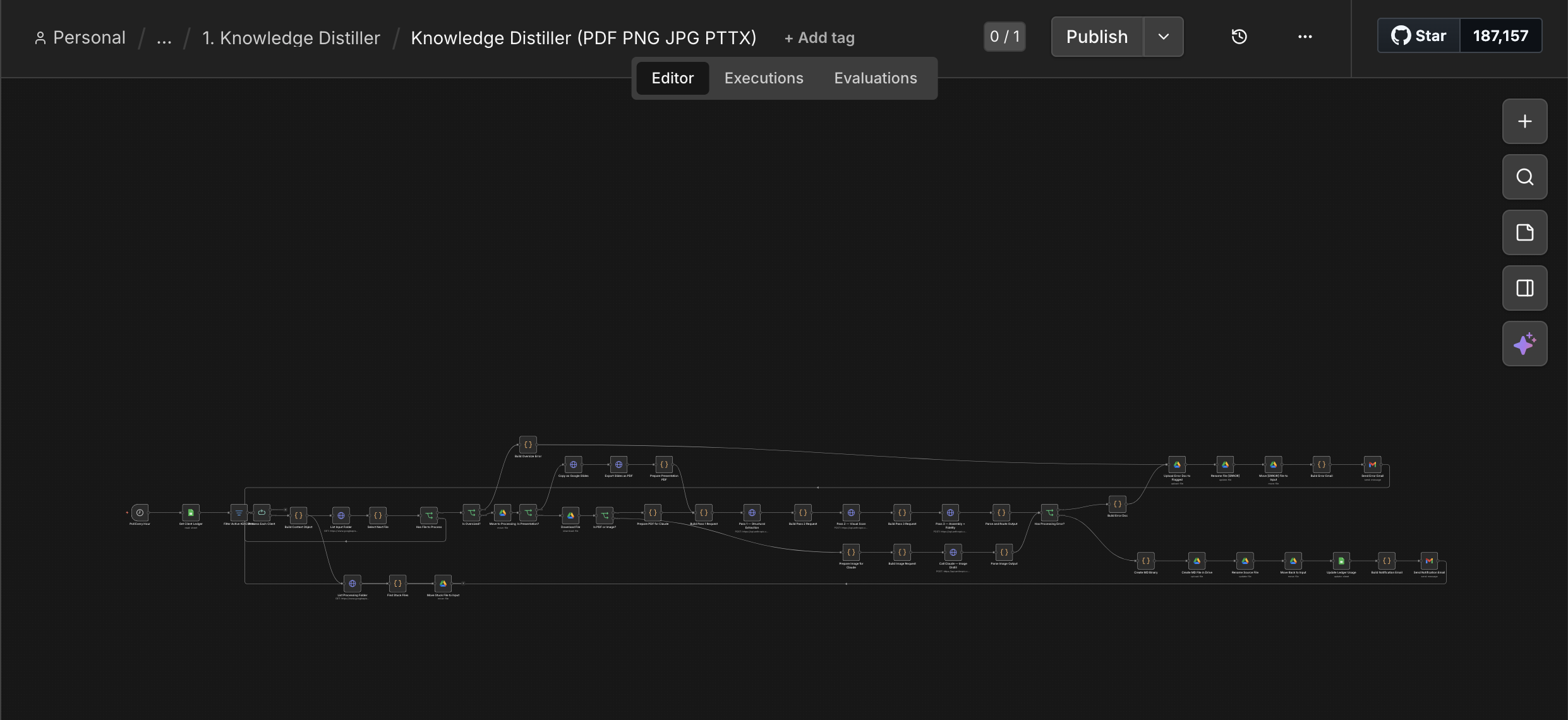

produced a findings deck with PII automatically stripped. The Knowledge Distiller

converted PDFs and slides into high-fidelity markdown for AI knowledge vaults. All three

scored 4.2–4.5 out of 5 on a 12-dimension quality rubric I ran on every job.

Research Strategist — drop 8 interviews in Google Drive, get a board-ready dossier in 33 minutes. The "no prompts, no dashboard" promise was the whole point.

What it looked like under the hood

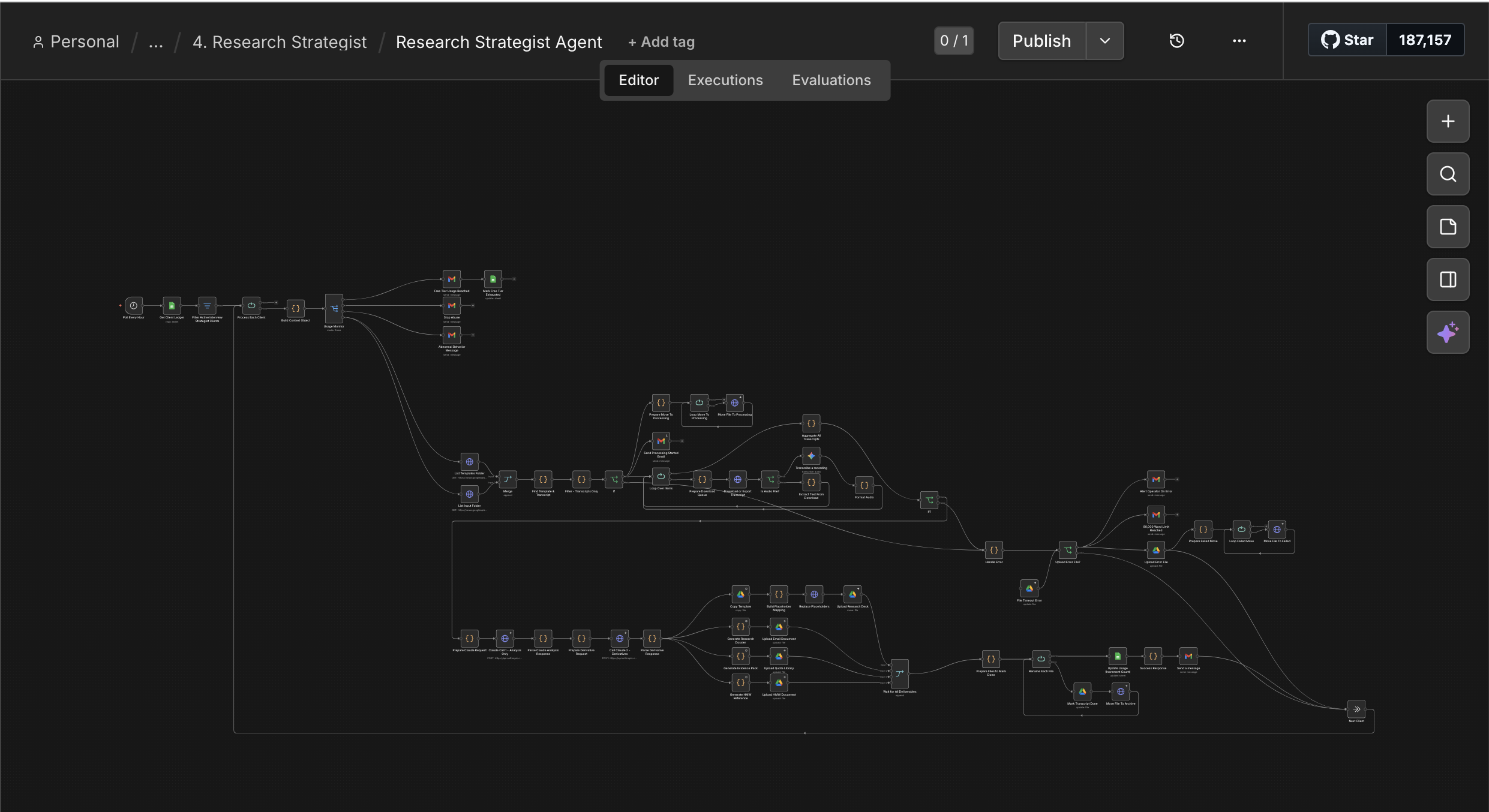

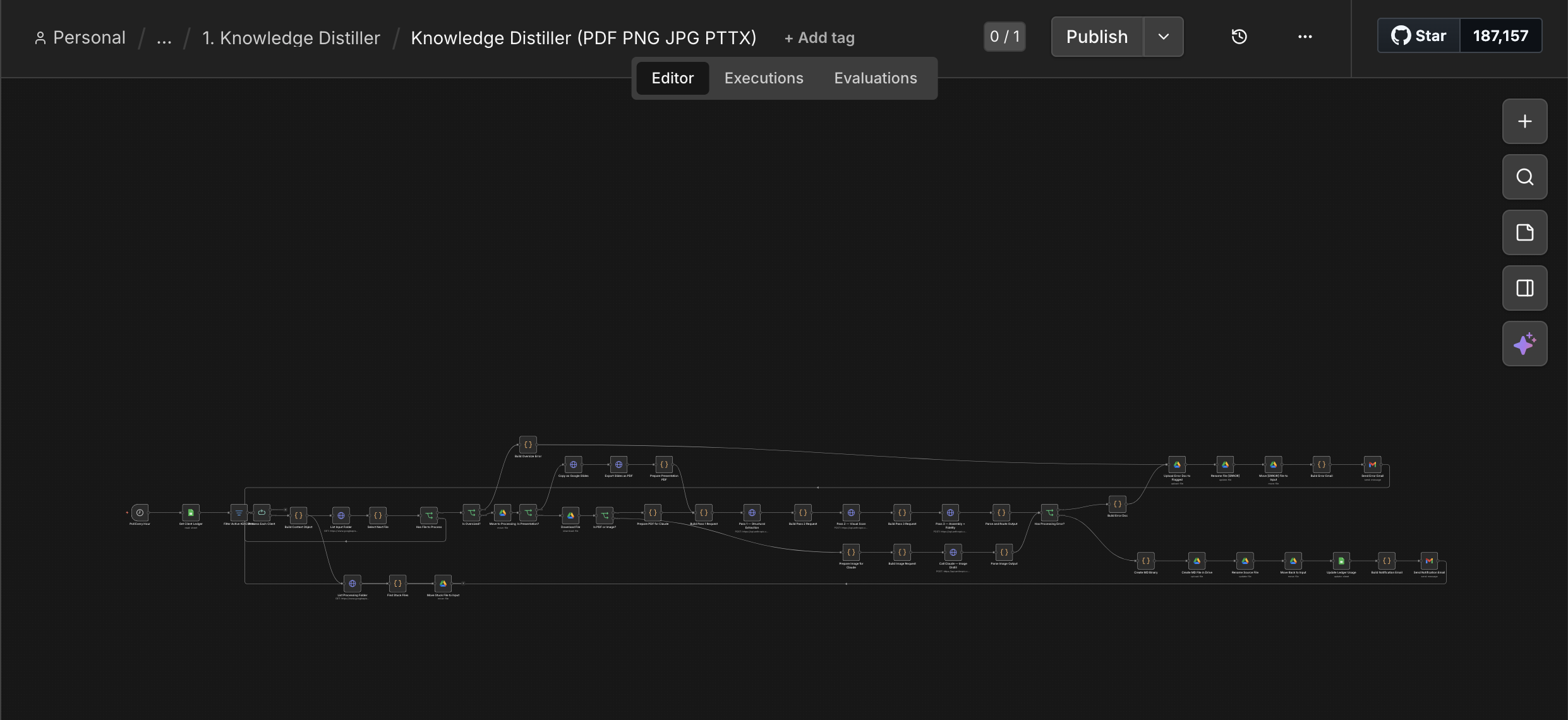

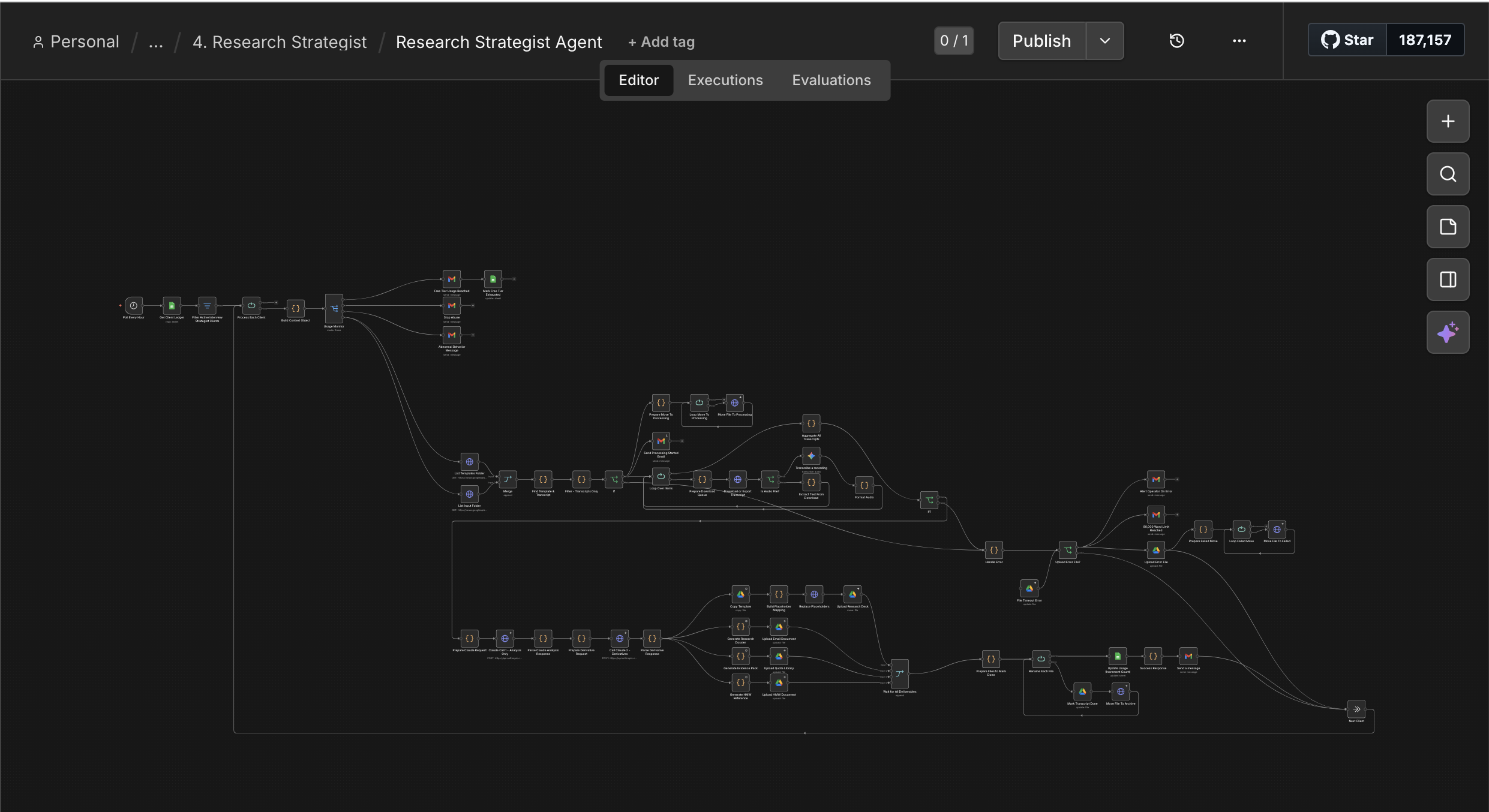

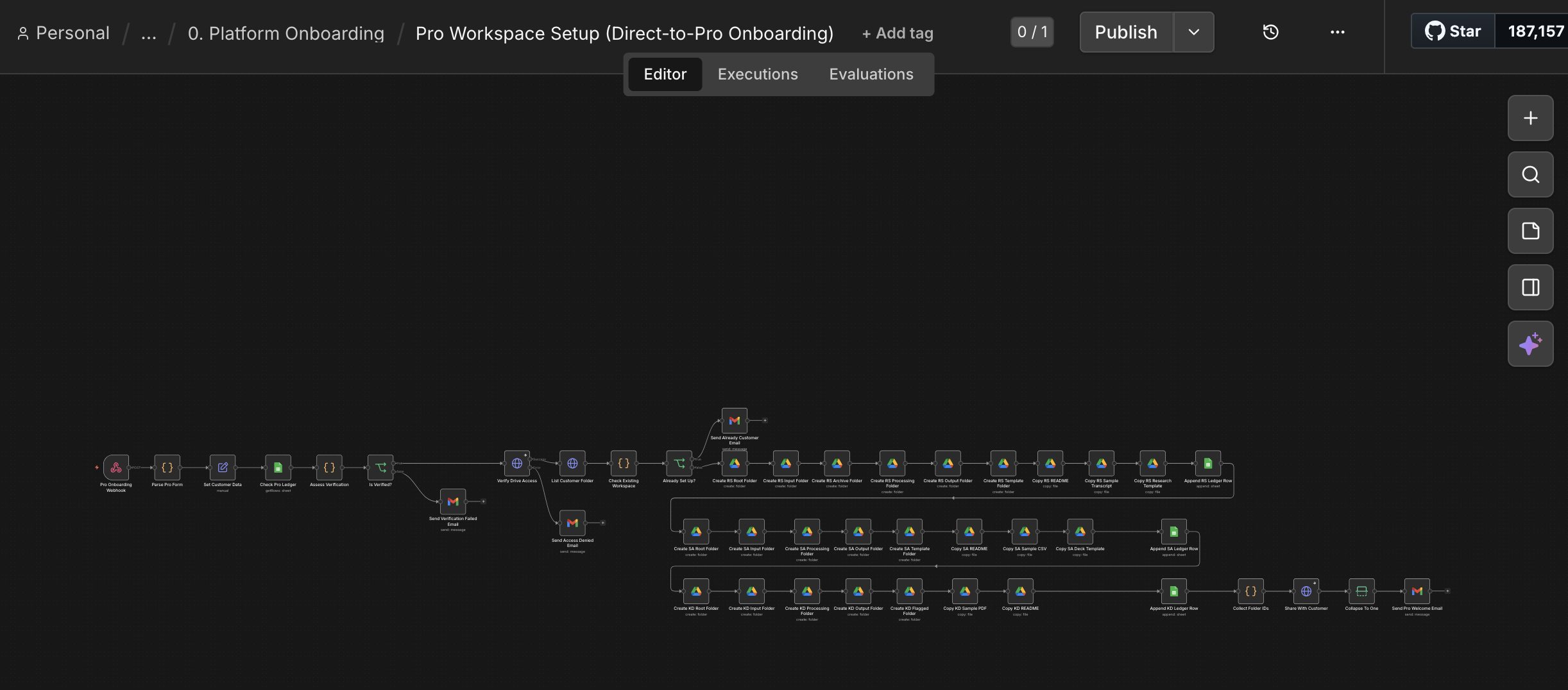

Each of those three agents is actually a multi-step pipeline. Below are the live n8n workflows. Every node is a step (parse, classify, route, call Claude, structure the output, write to Drive). The squiggly mess is the point: this isn't one prompt, it's a chain of dozens of decisions that turn raw input into something you'd actually hand to a board.

Research Strategist — the agent that turned a 26-hour synthesis job into 33 minutes. Each node is a Claude call, a parser, or a routing decision.

Survey Analyzer — handles mixed-methods data (open-ended + multiple-choice) and strips PII before any of it reaches the model.

Knowledge Distiller — three-pass Claude pipeline that pulls structure, then visual elements, then assembles a fidelity-scored markdown output.
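For a concrete picture of what "a chain of steps" means, the sequence described above (parse, classify, route, call the model, structure the output) can be sketched in a few lines of Python. This is an illustration only, not the production system: the real workflows are n8n node graphs, and every name here (`Job`, `classify`, `route`, `call_model`, the `PROMPTS` table) is hypothetical. The model call is a stub and the write-to-Drive step is left out.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Job:
    raw: str                      # raw input text dropped by the user
    kind: str = ""                # set by classify()
    prompt: str = ""              # set by route()
    output: str = ""              # set by call_model() and structure()
    trail: list[str] = field(default_factory=list)  # names of steps that ran


# Hypothetical routing table: which prompt template each input kind gets.
PROMPTS = {"survey": "survey_findings", "transcript": "research_dossier"}


def parse(job: Job) -> Job:
    job.raw = job.raw.strip()
    return job


def classify(job: Job) -> Job:
    # Assumed toy rule: a comma in the first line means CSV-like survey data.
    first_line = job.raw.splitlines()[0] if job.raw else ""
    job.kind = "survey" if "," in first_line else "transcript"
    return job


def route(job: Job) -> Job:
    job.prompt = PROMPTS[job.kind]
    return job


def call_model(job: Job) -> Job:
    # Stub standing in for the real LLM call; no network access here.
    job.output = f"[{job.prompt}: summary of {len(job.raw)} chars]"
    return job


def structure(job: Job) -> Job:
    job.output = f"# Findings\n\n{job.output}"
    return job


STEPS: tuple[Callable[[Job], Job], ...] = (
    parse, classify, route, call_model, structure,
)


def run_pipeline(job: Job, steps=STEPS) -> Job:
    # Each step takes the job, updates it, and hands it to the next step.
    for step in steps:
        job = step(job)
        job.trail.append(step.__name__)
    return job


if __name__ == "__main__":
    done = run_pipeline(Job(raw="q1,q2\nyes,no"))
    print(done.kind, done.prompt, done.trail)
```

The point of the shape is that each decision is a small, separately testable step, which is roughly what a node in a workflow graph gives you.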

And the platform around it

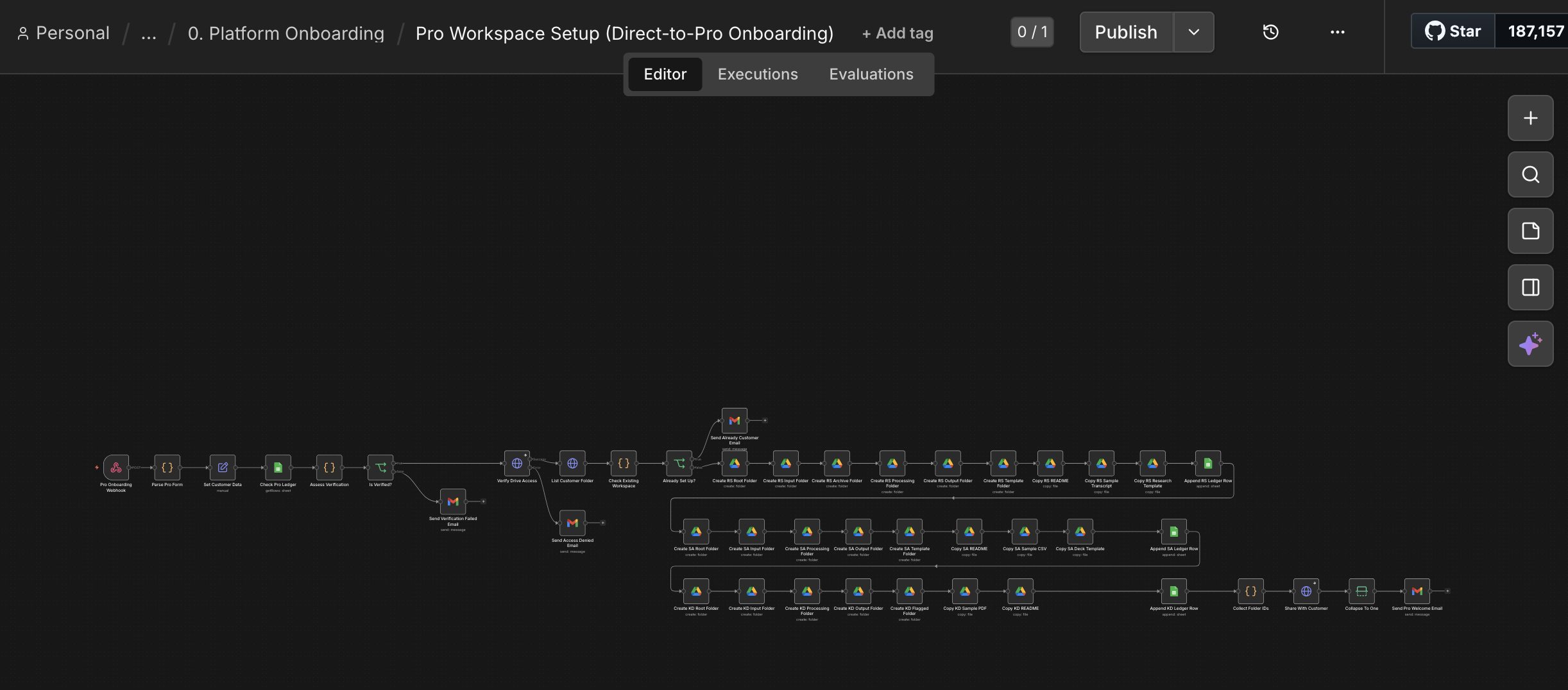

Three agents alone don't make a product. You also need onboarding, billing, usage limits, and the boring stuff that turns a script into a SaaS. I built that too: Stripe-integrated Pro onboarding, a Tally-driven free-tier flow, and a 4-branch usage monitor that classified each new job as OK to run, abusive, anomalous, or hitting the free-tier limit.

Pro tier onboarding — a Stripe webhook fires, the agent provisions a workspace, drops in templates and sample files, and sends the welcome email. About thirty seconds, end to end.
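The 4-branch usage monitor mentioned above is the easiest piece to show in miniature. Here is a minimal sketch of that kind of check, assuming a simple threshold-based design. The field names, the thresholds, and the order in which branches are evaluated are all assumptions made for illustration; the production monitor ran as an n8n workflow and its actual rules are not reproduced here.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    OK = "ok"
    ABUSE = "abuse"
    ANOMALY = "anomaly"
    FREE_LIMIT = "free_limit"


@dataclass(frozen=True)
class UsageSnapshot:
    tier: str               # "free" or "pro"
    jobs_this_month: int    # jobs already run in the current billing month
    jobs_last_hour: int     # jobs submitted in the last 60 minutes
    input_megabytes: float  # size of the new job's input


# Hypothetical thresholds, chosen only to make the example concrete.
FREE_MONTHLY_JOBS = 3
ABUSE_JOBS_PER_HOUR = 20
ANOMALY_INPUT_MB = 500.0


def check_usage(u: UsageSnapshot) -> Verdict:
    # Branch 1: sustained hammering looks like abuse, regardless of tier.
    if u.jobs_last_hour >= ABUSE_JOBS_PER_HOUR:
        return Verdict.ABUSE
    # Branch 2: a single oversized input is flagged for review.
    if u.input_megabytes > ANOMALY_INPUT_MB:
        return Verdict.ANOMALY
    # Branch 3: free-tier accounts stop at their monthly allowance.
    if u.tier == "free" and u.jobs_this_month >= FREE_MONTHLY_JOBS:
        return Verdict.FREE_LIMIT
    # Branch 4: nothing tripped, so the job is OK to run.
    return Verdict.OK


if __name__ == "__main__":
    print(check_usage(UsageSnapshot("free", 3, 1, 12.0)))
```

Checking abuse and anomalies before the free-tier limit is a design choice in this sketch: it means a misbehaving account gets the more serious label even if it has also run out of free jobs.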

What I learned in the beta

The platform worked. The agents worked. The users, exactly my ideal customer profile (ICP), also worked, sort of. They used it to skip the thinking entirely. They didn't engage with the data. They didn't read the dossiers. They forwarded them. The faster I made the synthesis, the less anyone actually synthesized.

I'd written an Empathy & Ethics framework before launch: an architecture-level constraint that said, in essence, this product should augment researchers, not replace the work that makes them researchers. I'd built guardrails into the prompts. Human-in-the-loop. Output traceability. The whole architecture was designed to make empathetic use the default. None of it survived contact with the economics. Once "save 26 hours" was on the table, "engage with what your users said" was always going to lose.

"I had users, and what I observed made me unwilling to scale the pattern."

— Why I stopped, in one sentence

26 hours → 33 minutes: 47× speedup, in production

4.2–4.5 / 5.0 quality across 12 dimensions

~16,900 lines orchestrated end-to-end (architecture, prompts, integration)

Six weeks from idea to shipping

What this story is about

I'm including this case study because it tells you three things at once. One: I can solo-ship a production multi-agent platform in six weeks, with full Stripe billing, stateless scaling, the works. Two: I notice ethical problems before the press release, not after. Three: when principle and revenue conflict, I pick principle. That third one is rare in AI right now. It's the exact thing AI-native companies say they want in leadership. Some of them actually mean it.